Distributed Dataset Mapping for 39699187, 965348925, 645753932, 8061867443, 2112004371, 954040269

Mapping a distributed dataset keyed by identifiers such as 39699187 and 965348925 raises one central problem: keeping records consistent across the platforms that hold them. Doing so depends on normalizing identifiers, mapping them reliably between systems, and generating unique keys so that records do not conflict. Handling these elements well improves operational efficiency and gives decision-makers data they can trust.

Understanding Distributed Datasets

A distributed dataset is one whose data is partitioned across multiple locations or systems, a common arrangement in modern data management.

Partitioning lets each node store and process its own share of the data, so capacity can grow by adding nodes rather than by enlarging a single machine.

Distributing data also spreads load across resources and, when partitions are replicated, lets the system keep serving requests if a node fails.

This approach allows for the seamless integration of diverse data sources while maintaining high performance and availability.
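As a minimal sketch of the partitioning idea, the example below assigns the identifiers from the title to partitions with a stable hash. The function names, the choice of SHA-256, and the partition count of 3 are assumptions made for illustration; they are not taken from any particular system.

```python
import hashlib

def partition_for(identifier: str, num_partitions: int) -> int:
    """Map an identifier to a partition index with a stable hash.

    hashlib is used instead of the built-in hash() because hash() on
    strings is randomized per process and would not agree across nodes.
    """
    digest = hashlib.sha256(identifier.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions

def assign_partitions(identifiers, num_partitions):
    """Group identifiers by the partition each one hashes to."""
    placement = {p: [] for p in range(num_partitions)}
    for identifier in identifiers:
        placement[partition_for(identifier, num_partitions)].append(identifier)
    return placement

# Identifiers from the title; three partitions is an arbitrary choice.
IDS = ["39699187", "965348925", "645753932",
       "8061867443", "2112004371", "954040269"]
placement = assign_partitions(IDS, num_partitions=3)
```

Every node that runs `partition_for` on the same identifier gets the same index, so a lookup can be routed without a central directory. Note that plain modulo placement moves most keys when the partition count changes; schemes such as consistent hashing reduce that movement.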

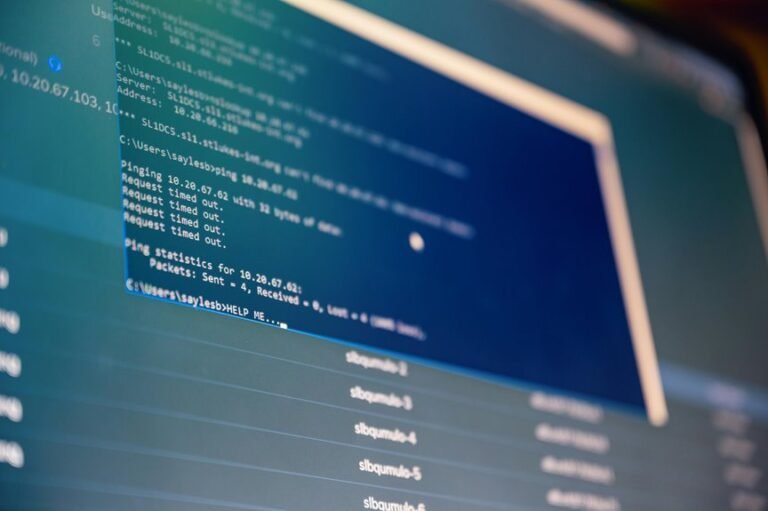

Techniques for Mapping Identifiers

Effective management of distributed datasets necessitates robust techniques for mapping identifiers across various nodes and systems.

Identifier normalization converts each identifier to a single canonical form, and mapping algorithms translate identifiers between systems; both are critical to data integrity and dataset consistency. Unique key generation prevents conflicts between records, while identifier reconciliation aligns records from disparate data sources that refer to the same entity.

Together, these techniques enhance the overall efficiency and reliability of distributed dataset management processes.
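The three techniques can be sketched together as follows. This is an illustrative sketch under stated assumptions, not a reference implementation: the normalization rule (keep ASCII digits, drop leading zeros), the namespace name `datasets.example.com`, and all function names are hypothetical.

```python
import string
import uuid

# Hypothetical namespace for this sketch; any fixed UUID would work.
NAMESPACE = uuid.uuid5(uuid.NAMESPACE_DNS, "datasets.example.com")

def normalize_identifier(raw) -> str:
    """Reduce a raw identifier to a canonical digit string.

    Assumption: separators, whitespace, and leading zeros carry no meaning.
    """
    digits = "".join(ch for ch in str(raw) if ch in string.digits)
    if not digits:
        raise ValueError(f"no digits in identifier: {raw!r}")
    return digits.lstrip("0") or "0"

def unique_key(raw) -> str:
    """Derive a deterministic key (UUIDv5) from the normalized identifier."""
    return str(uuid.uuid5(NAMESPACE, normalize_identifier(raw)))

def reconcile(sources):
    """Align identifiers from several sources.

    sources maps a source name to an iterable of raw identifiers.
    Returns a dict from canonical identifier to the sorted list of
    source names in which it appears.
    """
    merged = {}
    for name, raws in sources.items():
        for raw in raws:
            merged.setdefault(normalize_identifier(raw), set()).add(name)
    return {canonical: sorted(names) for canonical, names in merged.items()}

# Two hypothetical sources that format the same identifier differently.
aligned = reconcile({
    "warehouse": ["39699187", "965348925"],
    "crm": ["0039699187", "645-753-932"],
})
```

Because `unique_key` is derived from the normalized form, two systems that see `39699187` and `0039699187` produce the same key without coordinating. If leading zeros or separators are significant in a given dataset, the normalization rule would need to change accordingly.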

Technologies Supporting Dataset Management

As organizations increasingly rely on data-driven decision-making, various technologies have emerged to support efficient dataset management.

Key technologies include data integration tools that streamline workflows, cloud storage that scales with data volume, and data governance frameworks that track data lineage and enforce privacy compliance.

In addition, machine learning algorithms support predictive analytics, helping organizations find patterns in large and complex datasets.

Best Practices for Data Accessibility

Accessibility is a cornerstone of effective data management: an organization can only make full use of the datasets its users can actually reach.

Adopting data democratization principles fosters user empowerment, ensuring that diverse users can access information seamlessly.

Implementing robust accessibility standards and inclusive design enhances user experience.

Effective metadata management and the promotion of open data initiatives further broaden access, reflecting a commitment to equity and transparency in how data is used.

Conclusion

Distributed dataset mapping depends on careful identifier normalization and reliable reconciliation. Data integration tools help organizations avoid key conflicts and work more efficiently, and sound accessibility practices make the resulting data usable across the organization. Together, these practices support informed decision-making and operational agility as data volumes and sources continue to change.